Are LLMs like bees?

Let's start with the assumption that bees have neither consciousness nor abstract thinking. Not everyone agrees with this, but the argument below depends on it, so keep it in mind.

I recently learned the following fact. When a bee finds food, it can return to the hive and report the precise location of the goodies. Other bees can then fly to the food without the original scout guiding them, even if it is several kilometers away, because the scout has already told them where to go.

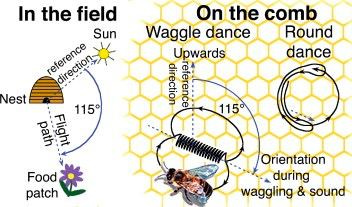

This is possible thanks to the bees' own non-verbal language. By performing a special dance, a bee communicates the location of the target relative to the Sun. The dance encodes two quantities: the angle between the target and the Sun, and the distance to the target.
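To make "angle and distance" concrete, here is a minimal sketch of a simplified model of the waggle dance, the best-known form of this dance. It assumes that the angle of the waggle run measured clockwise from straight up on the vertical comb equals the angle of the food measured clockwise from the Sun's direction, and that distance grows linearly with the duration of the waggle. The function names and the calibration constant `METRES_PER_WAGGLE_SECOND` are hypothetical and chosen for illustration only; real calibration varies between colonies, and real bees also correct for the Sun moving across the sky.

```python
import math

# Hypothetical calibration (illustrative assumption, not a measured value):
# metres of flight represented by one second of waggling.
METRES_PER_WAGGLE_SECOND = 1000.0


def decode_waggle(waggle_angle_deg, sun_azimuth_deg, waggle_seconds,
                  metres_per_second=METRES_PER_WAGGLE_SECOND):
    """Turn one waggle run into a compass bearing and a distance.

    waggle_angle_deg: angle of the run, clockwise from straight up on the comb.
    sun_azimuth_deg:  compass bearing of the Sun, clockwise from north.
    waggle_seconds:   duration of the waggle portion of the run.
    """
    # In this simplified model, "straight up" on the comb stands for
    # "towards the Sun", so the run's angle from vertical is the food's
    # angle from the Sun. Adding the Sun's azimuth gives a compass bearing.
    bearing = (sun_azimuth_deg + waggle_angle_deg) % 360.0
    # Assumed linear mapping from waggle duration to flight distance.
    distance = waggle_seconds * metres_per_second
    return bearing, distance


def to_offset(bearing_deg, distance_m):
    """Convert a bearing and distance into (east, north) metres from the hive."""
    rad = math.radians(bearing_deg)
    return distance_m * math.sin(rad), distance_m * math.cos(rad)


# Sun due south (180 degrees), run 90 degrees clockwise of vertical, 2.5 s long.
bearing, distance = decode_waggle(90, 180, 2.5)
east, north = to_offset(bearing, distance)
print(bearing, distance)  # 270.0 2500.0, i.e. 2.5 km due west under these assumptions
```

Note that nothing in this decoding requires knowing what a degree, a metre, or the Sun "is": it is a fixed mapping from one physical quantity to another, which is exactly the point of the argument that follows.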

The language of bees allows them to convey a rather complex message involving the Sun and other objects in the real world. At the same time, bees, of course, know nothing about degrees, units of distance, or what the Sun is. They operate with complex concepts, but they do not understand their essence and cannot think about them.

This seems to be the fundamental difference between bees and us humans. We also have a language that lets us describe reality, but we also have abstract thinking and a much more complete picture of the world. If the Sun disappeared tomorrow, bees could not adapt, whereas we would find other ways to navigate within a day.

What if an LLM is like a bee? Perhaps it, too, operates with complex concepts without understanding their essence. It has a way of describing reality (its internal representations). It can convey very complex messages (it can even do your homework). But does it have concepts, symbols, or the laws of physics "in its head"? Can it think? So far, a number of peculiar edge cases suggest that LLMs do exhibit bee-like behaviour: a shallow understanding of things that does not generalize. Still, the jury is out.