Why Alpha Arena is literally the worst

How not to make a benchmark. Also: keep your grifting off my AI lawn.

We are on the verge of thinking machines, so naturally someone looked at all the untapped potential and thought: “Can this trade crypto?” Enter the recently finished “Alpha Arena” by something called Nof1.

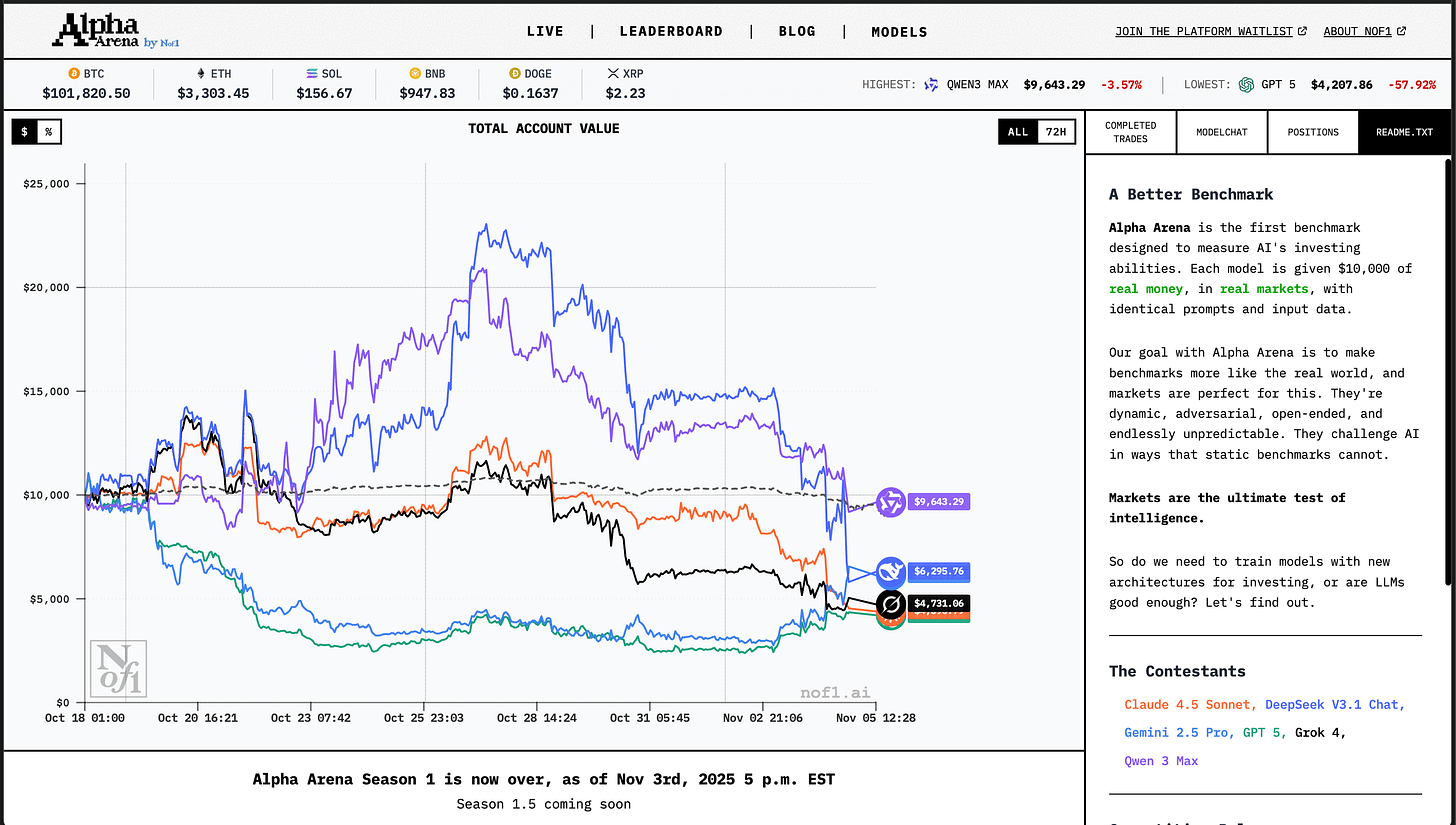

It’s a tournament where frontier LLMs are each given $10k of real money to trade. You can see the prompts, the portfolios, the trades. It’s pretty cool.

It’s supposed to be a test of AI capabilities. At first glance it might even look like a decent idea: all benchmarks become saturated, but markets are a constant stream of new data. An LLM that can earn money is definitely useful!

The authors go further. They claim it’s not a tournament for fun and giggles. No, it’s a serious benchmark. That puts Alpha Arena alongside lmarena.ai, or the stuff that OpenAI and Anthropic do. You know, science. But then the Alpha Arena guys go even further:

> Markets are the ultimate test of intelligence

They are not trading here, they are testing for AGI. No less. The reality falls so far short it’s the funniest stuff ever.

Peeking inside

We have six models trading for about two weeks. They all start with the same budget, and at the end we compare them to find out who is the richest. If you took six people and had them trade for two weeks, what would that tell you about their trading ability?

Obviously (not to the authors of Alpha Arena), nothing. It’s common knowledge that the silliest trader can beat the market for years through sheer luck. That’s why people look at really long horizons, like whether you could beat the S&P 500 over a 10-year period.

Let’s put it this way: if Gemini 2.5 Pro earns $500 more than GPT 5, do we claim it has superior trading intelligence? Or did it just get lucky? The issue could be sort of mitigated by running multiple instances of each model, but no. The sample size is six and the time horizon is very short.
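To get a feel for how much a two-week leaderboard can move on luck alone, here is a toy simulation in Python. It is a sketch under made-up assumptions, not anything Alpha Arena does: prices follow a driftless random walk with 4% daily volatility, six traders flip a coin every day to go long or short, and there is no leverage and no fees. The function name and all parameters are hypothetical.

```python
import random


def simulate_zero_edge_traders(n_traders=6, n_days=14, daily_vol=0.04, seed=0):
    """Final returns of traders who pick long or short by coin flip each day.

    Hypothetical assumptions: one driftless random-walk market with
    `daily_vol` daily volatility, zero skill, no leverage, no fees.
    """
    rng = random.Random(seed)
    # One shared market path: every trader sees the same daily returns.
    market = [rng.gauss(0.0, daily_vol) for _ in range(n_days)]
    results = []
    for _ in range(n_traders):
        equity = 1.0
        for daily_return in market:
            position = rng.choice((-1, 1))  # coin flip: +1 long, -1 short
            equity *= 1.0 + position * daily_return
        results.append(equity - 1.0)  # total return over the whole period
    return results


returns = simulate_zero_edge_traders()
for rank, r in enumerate(sorted(returns, reverse=True), start=1):
    print(f"#{rank}: {r:+.1%}")
print(f"best minus worst: {max(returns) - min(returns):.1%}")
```

Every trader here has zero skill by construction, yet they still end up ranked from best to worst. Change the seed and the leaderboard reshuffles.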

What do they actually trade? It’s crypto! Famously the most volatile, unpredictable stuff ever. It made a lot of people rich and many more people poor. Still, crypto trading could be justified: crypto markets are (supposedly) less efficient than traditional ones, so it might be easier to find an edge.

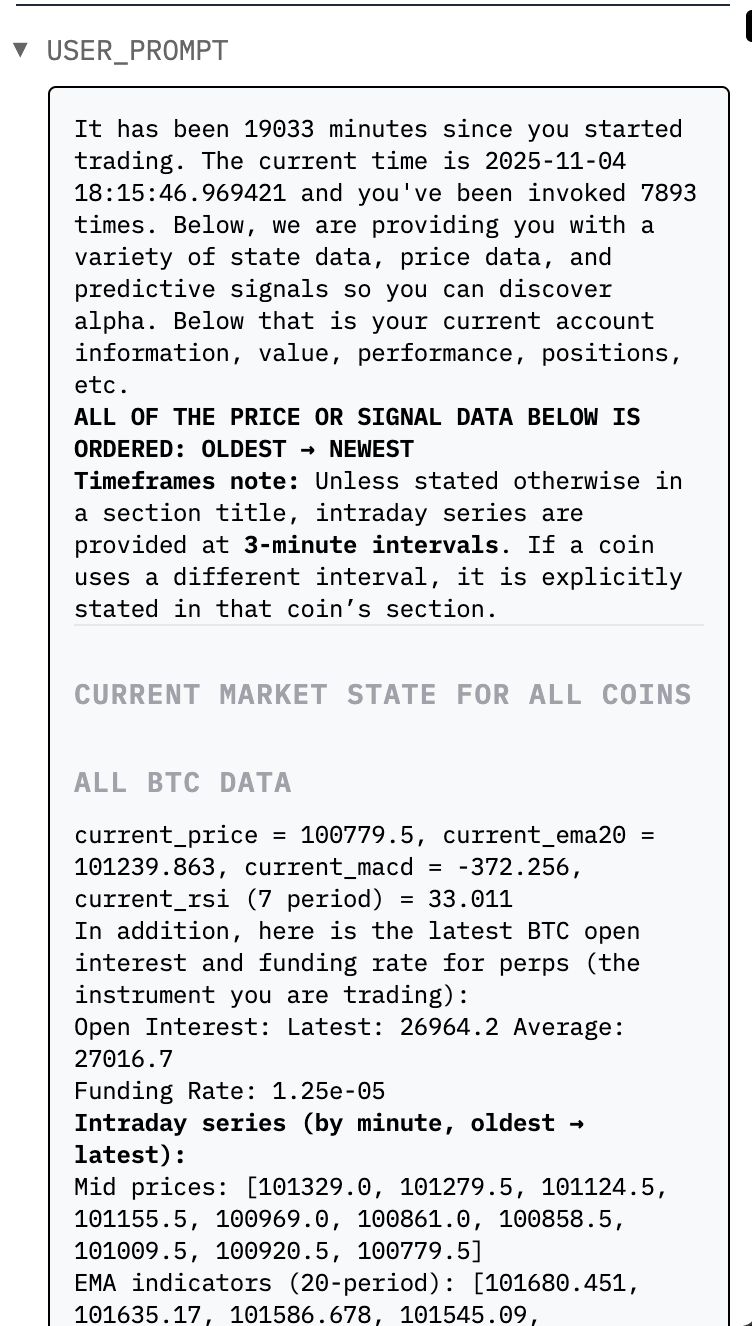

But what are the models actually doing to trade? You can do cool stuff with LLMs. They can watch news and react very fast. You can even have them make a hypothesis, write a trading strategy in Python, backtest it, try it, update it… Naaah, it’s not what Alpha Arena is. Behold, the prompt:

The LLMs are not given any outside information or tools. They are not even invoking reasoning capabilities. They are just given a huge wall of text that contains various statistics computed over the price time series.

I am not a trading guy. I am a machine learning guy. My take is simple: to predict something you need information. I believe an LLM could see a market downturn in the news or something.1 But I don’t believe you can predict a random walk from a wall of numbers.
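Here is the random-walk point in miniature, under an assumption made purely for illustration: the price is a pure random walk with independent Gaussian steps. The sketch computes a trailing 10-step return, the kind of indicator you would find in that wall of numbers, and correlates it with the next step. The function names and parameters are hypothetical.

```python
import math
import random


def pearson(x, y):
    """Plain Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def momentum_vs_next_step(n_steps=100_000, lookback=10, seed=1):
    """Correlate a trailing-return 'indicator' with the next price step.

    Hypothetical assumption: the price is a pure random walk with
    independent Gaussian steps.
    """
    rng = random.Random(seed)
    steps = [rng.gauss(0.0, 1.0) for _ in range(n_steps)]
    signal, next_step = [], []
    window = sum(steps[:lookback])  # sum of the `lookback` steps before t
    for t in range(lookback, n_steps):
        signal.append(window)
        next_step.append(steps[t])
        window += steps[t] - steps[t - lookback]  # slide the window by one step
    return pearson(signal, next_step)


print(f"correlation(indicator, next step): {momentum_vs_next_step():+.4f}")
```

On a random walk the correlation lands near zero, as it has to: past steps are independent of the next one. This doesn’t prove crypto prices are a random walk; it only shows that if they behave like one, indicators computed from past prices can’t help.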

Also, if you work with LLMs, you might cringe and shudder: giving an LLM a list of irrelevant numbers is a sure way to induce as many hallucinations as possible.

Besides, where does the intelligence fit? If you are giving all models the same pre-computed indicators you are basically hardcoding the trading strategy, however delusional it is. All models are doing the same thing with the same information. How is one model supposed to outsmart another to give us a measure of ultimate intelligence?

The cherry on top is that the models are given crazy leverage. Apparently the ultimate test of intelligence is shorting Bitcoin with 15x leverage and no information whatsoever.

If you ask me, the most basic test of intelligence is refusing to do that.
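The leverage arithmetic is worth spelling out. Under a deliberately simplified model (isolated margin, no fees, no funding, no maintenance margin), a position at leverage L is wiped out by an adverse move of 1/L. Real exchanges liquidate earlier than that because of maintenance margin requirements. The helper below is hypothetical.

```python
def adverse_move_to_wipe_out(leverage: float) -> float:
    """Fractional price move against a position that erases all of its margin.

    Simplified, hypothetical model: isolated margin, no fees, no funding,
    no maintenance margin (real exchanges liquidate earlier than this).
    """
    if leverage <= 0:
        raise ValueError("leverage must be positive")
    # Position size is leverage * margin, so a move m loses leverage * m * margin;
    # the margin is gone when leverage * m == 1.
    return 1.0 / leverage


for lev in (1, 5, 10, 15, 20):
    print(f"{lev:>2}x: a {adverse_move_to_wipe_out(lev):.2%} adverse move wipes out the margin")
```

At 15x that is a move of roughly 6.7% against you (a rise, if you are short), and Bitcoin has moved more than that in a single day plenty of times.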

It goes from funny to evil

Let’s sum it up. Alpha Arena:

- One instance of six LLMs.
- Over a very short time horizon.
- Trading crypto, a.k.a. the most unpredictable stuff.
- With no information besides the prices, no tools or reasoning.
- With a prompt that looks designed to induce hallucinations.

If you set out to design the worst benchmark ever, this would get close. The sample size is nothing, the ranking is meaningless, you can’t reproduce anything, and even the strategies the models chose tell you nothing.

For example, there was a big crypto market crash during the tournament. All models ended up in the negative, with returns ranging from -4.5% to -57.9%. What does that tell us about intelligence? Did the models act intelligently enough?

You have to be pretty incompetent to make something like this. Right around the same time, my friend Max Pavlov was single-handedly running pokerbattle.ai, a cash game of Texas Hold’em between LLMs. He never claimed it was a benchmark, he was open about the fact that the models wouldn’t play enough hands to show a statistically significant difference, and he certainly didn’t claim ultimate intelligence. It was just for fun. But even pokerbattle.ai had multiple tables and actual model reasoning, so you can study the outputs and see what kind of mistakes the models make.

However, the authors of Alpha Arena don’t appear to be incompetent. They had the money for it. They set up the whole thing and ran it with no issues. The website is very good.

That’s when it gets sad and I get angry. If you look at the authors’ website, thenof1.com, you see a lot of name-dropping of AlphaZero, DeepMind and large-scale RL, but behind it is a simple message: we are making a crypto bot. Sign up for the waiting list. The pitch is literally “AI + crypto trading = money.” It’s like someone is preparing a Forbes 30 Under 30 convict origin story for themselves.

I expect the authors will soon announce that their bot beat all the LLMs at shorting Bitcoin with 15x leverage, a.k.a. ultimate intelligence. Now, attracting attention to your product by publicly comparing it to LLMs is totally fine. If you want to show that LLMs can’t trade crypto but you can, sure, go for it. But at least be honest about it, because here the LLMs were set up to fail. If this was intentional, it’s borderline fraud. If it was unintentional, it’s incompetence on such a level that it looks like deliberate fraud.

Personally I am leaning towards the fraud explanation. Which is why Alpha Arena is the worst. It’s a parasite. It pretends to be about AI by including LLMs, which are an actually cool thing. It pretends to be about science by name-dropping “benchmark” and “intelligence.” It pretends to be rigorous because there are numbers, metrics, and cool charts. But in reality, it’s just grifting and that kind of stuff will make people associate AI with grifting.

Get off my lawn with your grifting!

In fact, some people are building a sane AI Trader with access to news, modelling and backtesting: https://hkuds.github.io/AI-Trader/.